The Agent Framework Gap: Part 1 - Why R Looks Behind (And Why It Might Not Be)

Hi All,

If you’ve been building with LLMs in 2026, you might have noticed something weird. Python has agent frameworks everywhere: LangChain, AutoGen, CrewAI, LlamaIndex, Agno, Pydantic AI, and the list goes on (and I haven’t even mentioned OpenClaw/Hermes agent). Search CRAN for “agent framework” in R? You’ll find… almost nothing.

So here’s the question: Is R missing the AI agent revolution? Or is something more interesting happening here?

Let’s dig in.

Python’s Agent Ecosystem

If you’ve been following AI development, you’ve probably seen these names:

- LangChain — The most popular. Think of it as a toolkit for connecting LLMs to tools, memory, and data. Over 90k stars on GitHub.

- AutoGen — Microsoft’s approach to multi-agent systems. You can create “teams” of agents that collaborate on tasks.

- CrewAI — Similar idea, but with role-based agents (researcher, analyst, reviewer).

- LlamaIndex — Focused on connecting agents to your documents and data sources.

These frameworks are mature. They have documentation, community support, and thousands of examples online. If you want to build an agent that searches the web, calls APIs, and writes reports, you can do it in a few lines of code.

Here’s what a typical LangChain agent looks like:

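```python
# Legacy LangChain API: initialize_agent and agent.run are deprecated in newer releases
from langchain.agents import initialize_agent, load_tools
from langchain.llms import OpenAI

llm = OpenAI(temperature=0)
# "serpapi" needs a SerpAPI key; "llm-math" gives the agent a calculator
tools = load_tools(["serpapi", "llm-math"], llm=llm)
agent = initialize_agent(tools, llm, agent="zero-shot-react-description")
agent.run("What's the weather in Tokyo? Multiply by 2.")
```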

Clean, right? That’s the power of a mature ecosystem.

R’s Current State

Now let’s look at R. The landscape is different, but not empty.

Here’s what exists today:

| Package | What It Does | Status |
|---|---|---|
| ellmer | Chat with LLMs, tool calling | Active development |
| tidyllm | LLM calls in tidy pipelines | Stable |
| chattr | Chat interface to LLMs inside the RStudio IDE | Basic but functional |
| mall | Row-wise LLM operations (sentiment, classification, summaries) on data frames | Early stage |

That’s… fewer than Python. And that’s okay.

Here’s what ellmer looks like in action:

```r
library(ellmer)

# chat_openai() reads the API key from the OPENAI_API_KEY environment variable
chat <- chat_openai()
chat$chat("What's the capital of France?")
#> Paris
```

Simple. But notice what’s missing: no built-in agent loop, no tool orchestration, no memory management. You’re building the car while driving it.

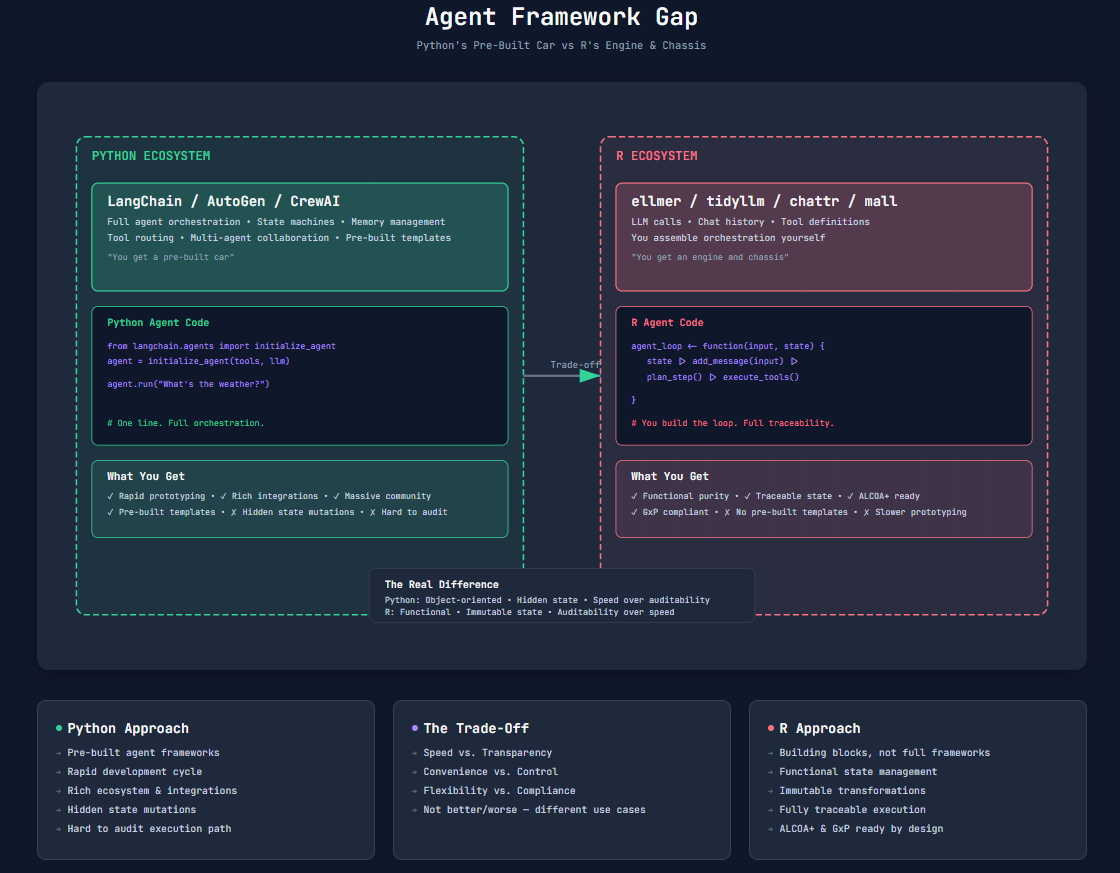

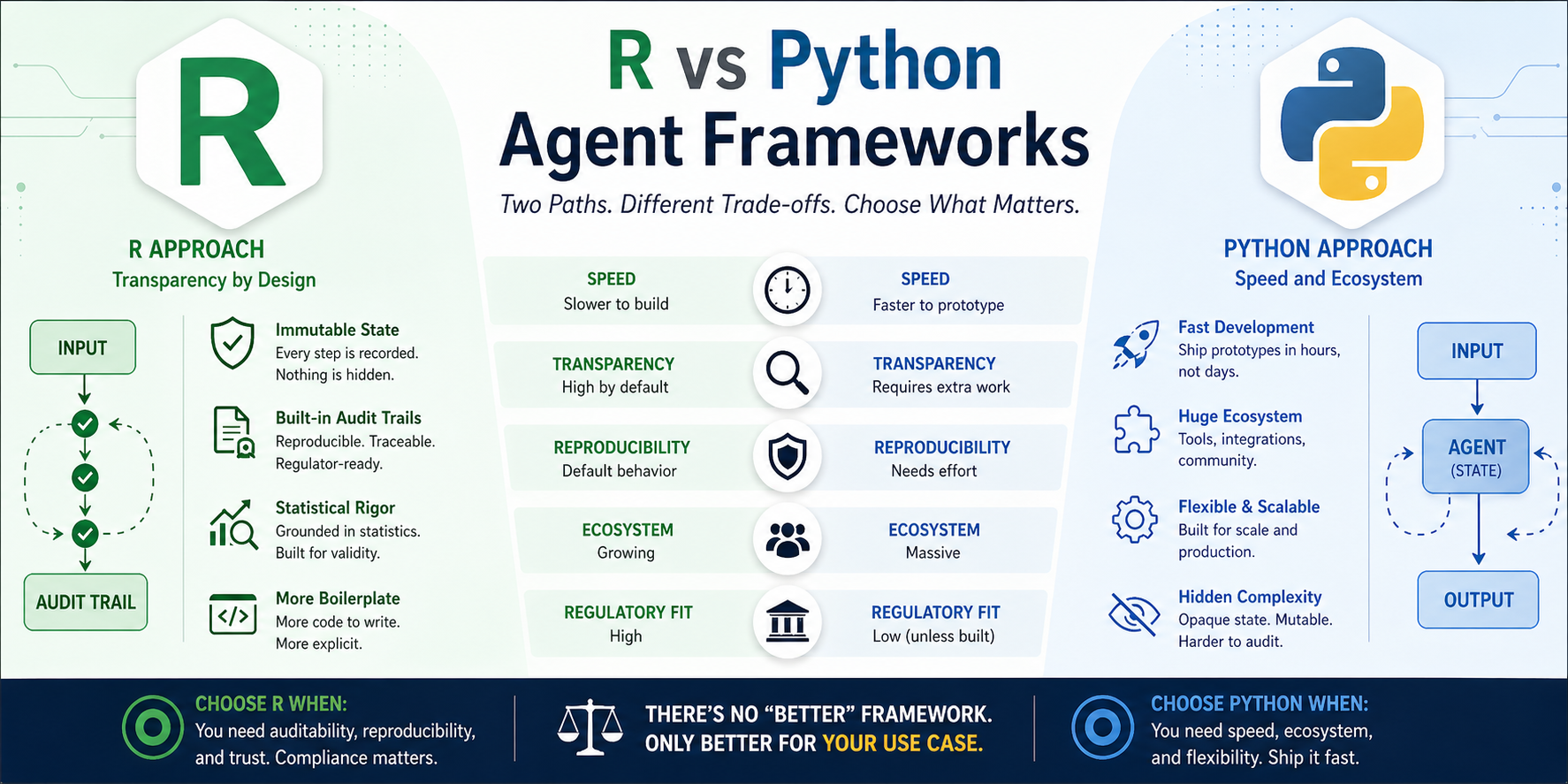

The Real Difference

The gap isn’t just about quantity. It’s about approach. Python frameworks give you full agent orchestration out of the box — state machines, memory management, tool routing, multi-agent collaboration. You get a pre-built car. R packages give you building blocks. You assemble the orchestration yourself. You get an engine, wheels, and a chassis. Both get you to the destination — but one requires more assembly.

Python gives you a pre-built car. R gives you an engine and chassis.

Why This Gap Exists

This isn’t an accident. Three things are happening:

1. Ecosystem Momentum

Python owns ML/AI. TensorFlow, PyTorch, scikit-learn: the entire stack is Python-first. When agent frameworks emerged, they naturally built on Python. Python’s open-source ecosystem is gigantic, and you can find an open-source alternative to almost every managed offering. R owns statistics. It’s the language of clinical trials, biostatistics, and regulatory submissions. The AI tools came later.

2. Architectural Mismatch

This is the interesting part. Python’s object-oriented design fits agent patterns naturally:

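```python
class Agent:
    def __init__(self):
        self.memory = []  # conversation history, mutated in place
        self.tools = []   # registered tools

    def run(self, input):
        self.memory.append(input)  # state changes inside the object
        return self._execute()

    def _execute(self):
        # Placeholder: a real agent would call the LLM and its tools here
        return f"processed {len(self.memory)} message(s)"
```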

State lives inside the object. Methods mutate that state. It’s clean, intuitive, and… hard to audit. R’s functional approach is different — state flows through functions, each returning a new state. No hidden mutations. But that requires different thinking, and it slows down framework development.
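To make the contrast concrete, here is a minimal sketch of the state-flows-through-functions style, written in Python so it can sit next to the class above. The names `AgentState`, `add_message`, and `execute` are made up for illustration, and `execute` is only a stand-in for a real LLM or tool call.

```python
from dataclasses import dataclass, replace
from typing import Tuple


@dataclass(frozen=True)
class AgentState:
    """Immutable snapshot of the agent; every step returns a new one."""
    memory: Tuple[str, ...] = ()
    results: Tuple[str, ...] = ()


def add_message(state: AgentState, message: str) -> AgentState:
    # Build a new state; the old one is left untouched.
    return replace(state, memory=state.memory + (message,))


def execute(state: AgentState) -> AgentState:
    # Placeholder for the LLM/tool call: it just echoes the last message.
    outcome = f"handled: {state.memory[-1]}"
    return replace(state, results=state.results + (outcome,))


s0 = AgentState()
s1 = add_message(s0, "summarize adverse events")
s2 = execute(s1)
```

Because `s0`, `s1`, and `s2` all still exist after the run, each intermediate state can be inspected or stored. That is the property the functional style buys you, at the cost of threading state through every call by hand.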

3. Industry Pressure

Tech companies build Python tools. They need agents for chatbots, content generation, and automation, where speed matters more than auditability. In Python, traces are recorded as part of a mature harness. Pharma uses R. It needs agents for clinical trial analysis, safety reporting, and regulatory submissions, where auditability matters more than speed. The market speaks: Python gets frameworks because there’s more demand, and R waits because its use cases are narrower (and more regulated).

The Hidden Trade-Off

Here’s where it gets interesting. Every choice has a cost.

What Python gains:

- Rapid prototyping

- Rich tooling and integrations

- Massive community support

- Pre-built templates for common patterns

What Python loses:

- Auditability (state mutations are hidden)

- Immutability (things change under the hood)

- Reproducibility (hard to trace exact execution path)

That said, analytics, recorded traces, and OpenTelemetry instrumentation are available to fill the gap.
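Recording traces does not have to be heavyweight. As a rough sketch using only the standard library (this is not OpenTelemetry, and `TRACE`, `traced`, and `multiply` are hypothetical names), a decorator can log every tool call an agent makes:

```python
import functools
import time

TRACE = []  # hypothetical in-memory trace store; a real harness would export these


def traced(fn):
    """Record name, arguments, result, and duration of every call to fn."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        result = fn(*args, **kwargs)
        TRACE.append({
            "step": fn.__name__,
            "args": args,
            "kwargs": kwargs,
            "result": result,
            "seconds": time.perf_counter() - start,
        })
        return result
    return wrapper


@traced
def multiply(x, factor):
    # Stand-in for a tool the agent might call
    return x * factor


multiply(21, factor=2)
```

The catch is that tracing like this is opt-in: any tool or state change that is not wrapped stays invisible, which is exactly the auditability gap described above.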

What R gains:

- Functional style (fewer hidden side effects)

- Traceable state (everything flows through functions)

- A natural fit for compliance work (ALCOA+, GxP, FDA submissions)

What R loses:

- Quick-start agent templates

- Pre-built integrations

- Community momentum

The punchline? The gap isn’t accidental — it’s a design choice.

What’s Possible Today

So what can you actually build in R right now?

With ellmer and some functional glue code, you can:

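```r
# Hypothetical helpers: each takes a state list and returns a new one.
# Real versions of plan_step() and execute_tools() would call an LLM (e.g. via ellmer).
log_step <- function(state, step, detail = NULL, timestamp = Sys.time()) {
  entry <- list(step = step, detail = detail, timestamp = timestamp)
  state$history <- c(state$history, list(entry))
  state
}
add_message   <- function(state, input, timestamp) log_step(state, "message", input, timestamp)
plan_step     <- function(state) log_step(state, "plan")
execute_tools <- function(state) log_step(state, "tools")
update_state  <- function(state, timestamp) log_step(state, "update", timestamp = timestamp)

# Simple agent loop (the native pipe |> needs R >= 4.1)
agent_loop <- function(input, state) {
  state |>
    add_message(input, timestamp = Sys.time()) |>
    plan_step() |>
    execute_tools() |>
    update_state(timestamp = Sys.time())
}

# Usage
initial_state <- list(history = list(), results = list())
final_state <- agent_loop("Analyze this clinical trial data", initial_state)

# Full audit trail, by design
audit_trail <- final_state$history
```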

It’s not LangChain. But it’s transparent. Every step is traceable. Every state change is logged. For pharma, finance, healthcare — that’s not a bug. It’s a feature.

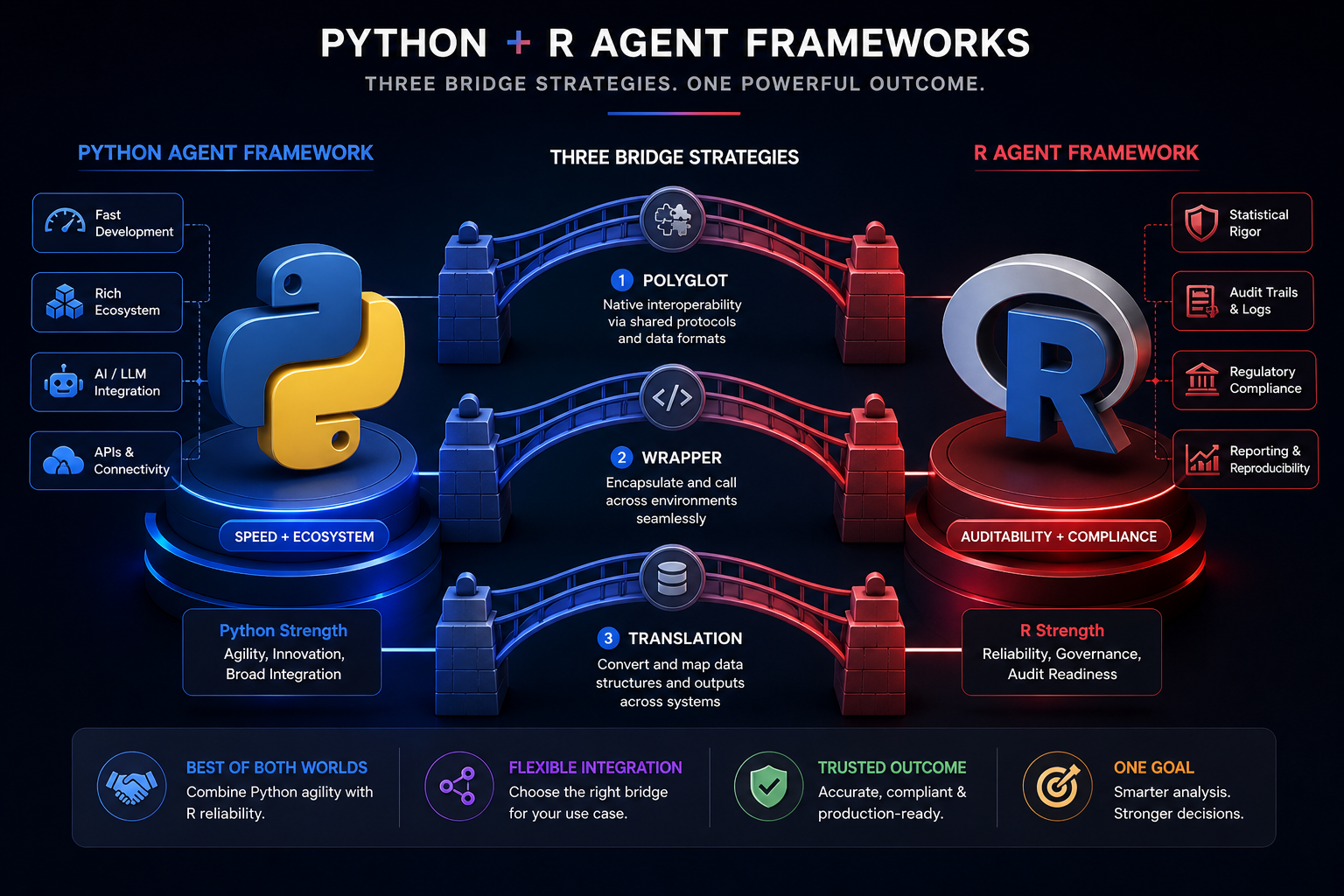

Bridging the Gap

The good news? The gap is closing.

New packages are emerging:

- TheOpenAIR: OpenAI-compatible agent interface

- air: Agent infrastructure for R

- Cross-language bridges (Python-R MCP servers)

And here’s the kicker: the coming wave of AI regulation (EU AI Act, FDA guidelines on explainable AI) might actually make R’s approach more valuable, not less. When regulators ask “how did your AI make that decision?”, you want an answer. Not “the agent decided.” You want “here’s the exact function, the exact state, the exact tool call.” That’s R’s superpower.
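What would that answer look like in practice? Here is one possible shape, sketched in Python for comparison with the earlier examples. The step functions `plan` and `call_tool`, the `run` helper, and the canned tool result are all assumptions made for illustration, not any framework's API.

```python
def plan(state):
    # Hypothetical planning step: decide which tool to call
    return {**state, "tool_call": {"name": "lookup", "arg": state["question"]}}


def call_tool(state):
    # Stand-in for a real tool; returns a canned result string
    call = state["tool_call"]
    return {**state, "answer": f"result of {call['name']} for {call['arg']}"}


def run(state, steps):
    """Apply each step in order and keep one audit entry per step."""
    audit = []
    for step in steps:
        before, state = state, step(state)
        audit.append({
            "function": step.__name__,
            "state_in": before,
            "state_out": state,
        })
    return state, audit


initial = {"question": "adverse events in group B"}
final, audit = run(initial, [plan, call_tool])

# Replaying the same steps from the same input reproduces the same trail
_, replay = run(initial, [plan, call_tool])
```

Each audit entry names the function that ran, the state it received, and the state it returned, including the tool call. Because the steps never modify their input, a replay from `initial` yields an identical trail, as long as the real tools behind them are deterministic.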

The Real Question

So here’s what I want you to think about:

Do we need Python-style agents in R? Or do we need R-style agents that happen to work?

- If you’re building a consumer chatbot? Python’s frameworks are the right choice. Ship fast.

- If you’re building an agent for clinical trials, safety reporting, or regulatory submissions? R’s functional approach might be exactly what you need.

The gap is real. But it’s not necessarily a problem — it’s a trade-off. And understanding that trade-off is the first step to building the right tool for your use case.

What’s Next?

I’m writing a deeper dive on this, covering:

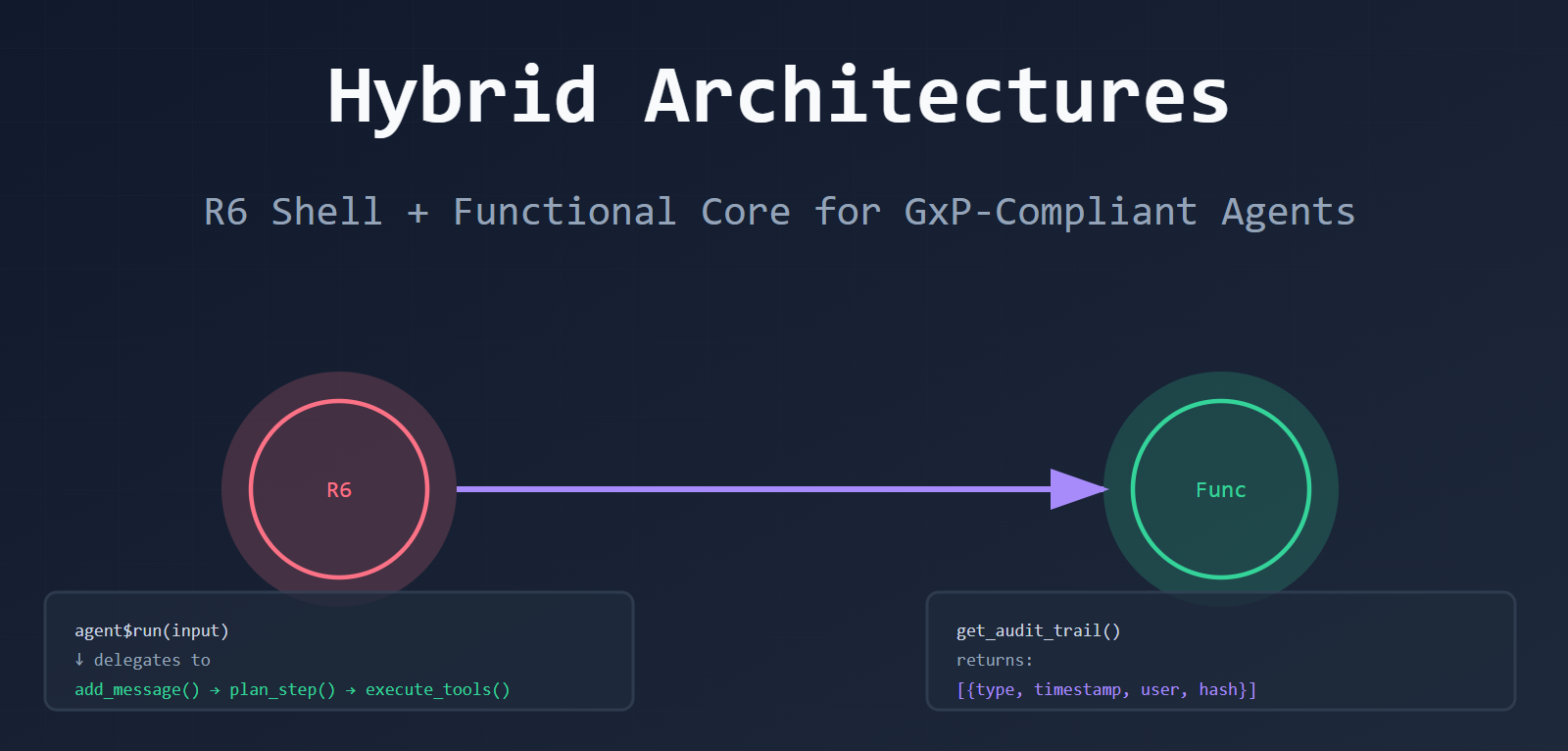

- Hybrid architectures (R6 + functional core)

- ALCOA+ compliance patterns

- Real-world clinical trial agent examples

If this topic interests you, drop a comment. What agent use cases are you building? What’s holding you back?

Thanks, Ashish
